04/23/2026 - Articles

Return on Digital Investment: How to Make the Value of Digitalization Measurable

Digitalization costs time, budget, energy, and organizational attention: new software has to be introduced, processes adapted, and employees trained. And yet, at some point, the questions inevitably arise: What does it actually deliver in the end? Can you prove that it was worth it? This is exactly where the concept of return on digital investment comes in. It describes the measurable value that digital initiatives actually create, not just in euros, but also in terms of time savings, fewer errors, better planning, and faster decision-making.

In this article, you will learn what the return on digital investment really means, why traditional ROI perspectives often fall short, and why the topic is becoming increasingly important, especially in the public sector. You will get a simple measurement logic, a KPI toolkit, and a step-by-step process for building return on digital investment systematically. Finally, using BCS as an example, we will show how projects, resources, and controlling can be connected in a way that makes value truly visible in day-to-day operations.

Return on digital investment at a glance

Digitalization alone does not create value—only measurable impact creates a return on digital investment.

Traditional ROI falls short: return on digital investment also takes into account time savings, quality, transparency, and the ability to steer and control operations.

Impact becomes manageable when a baseline, clear KPIs, and a structured impact logic (input → outcome → impact) are defined.

In the public sector, “return” means public value—not profit, but better services and greater capacity to act.

End-to-end coordination of projects, resources, and controlling is the key lever for sustainably realizing return on digital investment.


What is return on digital investment?

Definition of return on digital investment

Return on digital investment is the measurable benefit of digital initiatives minus their cost, across both financial and non-financial effects.

At first glance, this definition may seem simple. In practice, however, it represents a fundamental shift in perspective. While traditional economic evaluations focus almost exclusively on costs and monetary returns, return on digital investment also takes into account effects that cannot be directly expressed in euros but still have a tangible impact on an organization’s day-to-day operations.

These include, among others:

reduced processing time

lower error rates

improved planning reliability

higher service quality

greater transparency and control

Return on digital investment is not a replacement for traditional financial metrics such as ROI (Return on Investment) or TCO (Total Cost of Ownership), but rather an extension. While ROI looks at cash flows and TCO describes total costs, return on digital investment focuses on the impact of digital initiatives in everyday operations.

It therefore answers a different question: not “Does the investment pay off financially?” but “What measurable changes occur within the organizational system as a result of the initiative?”

ROI vs. return on digital investment

Digitalization rarely affects just a single point—it typically impacts entire processes. Return on digital investment makes this impact visible where traditional ROI remains blind.

| ROI | Return on digital investment |
|---|---|
| monetary | monetary and non-monetary |
| point-specific | cross-process |
| retrospective | also forward-looking and used for ongoing management |
| focus on investment | focus on impact |

The comparison is intentionally simplified. Especially in complex digital projects, for example in project-based work, services, or public administration, ROI alone is often not a sufficient metric. In practice, both perspectives complement each other:

- ROI remains relevant for investment decisions

- Return on digital investment complements operational management

Problems arise when the effects of return on digital investment are translated into monetary values across the board without making the underlying assumptions transparent (e.g., time savings without actual capacity adjustments).

Digitalization vs. digitalization success

Another common misconception is equating digitalization with success.

| Activity (what was done) | Benefit (what measurably changed) |
|---|---|
| We introduced a new tool. | Processing times have decreased. |
| The process is now digital. | Errors have been reduced. |
| The data is stored in the system. | Decisions are made faster and on a more informed basis. |

Key takeaway

Digitalization is the prerequisite; return on digital investment is the result.

Common misconceptions about return on digital investment

| Misconception | What it really means |
|---|---|
| “We implemented software, so we’ve realized value.” | Software alone does not change reality. Measurable value only emerges when processes are adapted, roles are clarified, and usage is established. |
| “More data automatically leads to better decisions.” | Without clear questions and clean data, numbers mainly create confusion and complexity. Return on digital investment does not come from data collection, but from information that is relevant for decision-making and management. |
| “The benefits will show up eventually.” | Without clear targets and measurement points, benefits remain vague and cannot be managed. |

Why return on digital investment becomes a management tool instead of just a buzzword

Three key factors explain why many organizations can no longer ignore return on digital investment:

1. Ongoing budget pressure

Whether in companies or public organizations, investments must be justified, prioritized, and defended. If no one can see the benefits, digitalization quickly becomes an ongoing internal debate.

2. Talent shortages as a structural issue

When staff are scarce, processes must become more efficient. Digitalization is expected to provide relief, but only return on digital investment reveals whether it actually does.

3. Rising expectations for service quality

Customers, clients, and citizens expect digital services that work, are easy to understand, and save time. These expectations can only be met if impact is actively managed.

Return on digital investment as a common language within the organization

An often underestimated advantage: return on digital investment connects perspectives.

It creates a shared basis for evaluation across:

management and executives

business units and project owners

IT and organizational functions

controlling and strategy

Instead of abstract discussions about “digitalization,” the focus shifts to concrete questions:

Which initiative delivers the greatest value?

Where should we allocate our limited resources?

Which effects are already measurable—and which are not?

Return on digital investment makes decisions comparable, transparent, and justifiable.

What happens without return on digital investment

If this management logic is missing, similar symptoms appear in many organizations:

Project graveyard: Initiatives are launched but not consistently used or further developed.

Shadow IT: Business units resort to their own solutions because central systems do not deliver noticeable value.

Low adoption: Employees experience digitalization as an additional burden rather than as support.

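Persistent media breaks: Isolated digital solutions (“digital islands”) do not replace end-to-end processes, so information still has to be transferred manually between paper, files, and systems.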

Without return on digital investment, digitalization becomes an end in itself. With return on digital investment, it becomes manageable.

Return on digital investment in companies: where value is typically created

Return on digital investment becomes particularly visible in companies where digitalization connects operational processes with management and transparency. Four value drivers are especially relevant.

Value driver 1: Revenue & margin

Digitalization has an indirect but lasting impact here:

faster proposal and sales processes

better tracking of opportunities and projects

shorter lead times to service delivery

higher utilization of teams and resources

Result: Services can be billed more quickly, projects become more economically predictable, and margins become more stable.

Value driver 2: Costs & efficiency

A classic but often underestimated lever:

automation of manual tasks

fewer errors through clear workflows

reduction of rework and duplicate data entry

shorter processing and coordination times

Especially for project-based service providers, this quickly leads to noticeable time savings that translate directly into higher productivity.

Value driver 3: Management & forecasting capability

Return on digital investment becomes especially visible at the management level:

reliable resource planning

ongoing project controlling

realistic forecasts instead of gut feeling

improved cash flow visibility through early transparency

Here, return on digital investment means fewer surprises, greater predictability, and better decisions.

Value driver 4: Risk reduction & compliance

Often not a primary goal, but a critical effect:

clear documentation of projects and decisions

higher auditability and compliance reliability

better contract fulfillment

reliable post-calculations as a basis for learning

These effects reduce risks, avoid follow-up costs, and strengthen organizational resilience.

Why return on digital investment is especially relevant in the public sector

In the public sector, digitalization is under particular pressure to deliver results. On the one hand, administrations are expected to become more modern, faster, and more citizen-centric. On the other hand, budgets are limited, legal requirements are strict, and changes are politically and organizationally sensitive. It is precisely in this tension that return on digital investment provides a simple guiding question: What has actually improved?

Unlike in businesses, the success of digital initiatives here cannot simply be measured in terms of revenue or profit. Nevertheless—and precisely for that reason—it must be determined whether and how digitalization is truly effective. Return on digital investment provides a framework that makes impact visible without forcing a profit-driven logic.

“Return” without profit logic: public value instead of profit

When “return” is discussed in the public sector, it does not refer to financial surplus. The real return lies in societal benefit—how effectively government action fulfills its purpose. Digitalization should help make services more understandable, accessible, and reliable. The “return” of public-sector digitalization is therefore reflected not in margins, but in public value.

This value unfolds on multiple levels:

Citizens and businesses benefit from clear, fast, and reliable services.

Employees are relieved through clearer processes, better tools, and fewer media breaks.

The state gains in its capacity to act, transparency, and trust.

Instead of asking, “What is the financial return?” the key question becomes: “What impact do we achieve with the resources we use?”

Return on digital investment translates this public value into a structured perspective: not whether something has been digitalized, but what has actually improved as a result.

Characteristics of the public administration context

Measuring and managing return on digital investment is more challenging in the public sector than in many companies. There are structural reasons for this:

Legal frameworks limit flexibility and slow down adjustments.

Budget structures separate investment and operating costs, making holistic evaluation more difficult.

Procurement processes extend implementation timelines.

Federal structures lead to varying responsibilities, standards, and IT landscapes.

Necessary standardization often conflicts with specific functional requirements.

In this environment, return on digital investment helps reduce complexity without ignoring it. It forces a focus on impact and value rather than solely on measures, programs, or resources used.

Where return on digital investment becomes visible in public administration

The benefits of digital initiatives in public administration are rarely spectacular, but they are clearly visible in everyday operations. They often arise from many small improvements along a process, which together create significant impact. When applications no longer need to be printed, passed around, and manually entered, processing times decrease. When data is consistent, employees can provide information more quickly. When processes are clearly structured, the number of follow-up questions decreases—both internally and externally. This is where return on digital investment emerges: through less friction, greater reliability, and better predictability.

Typical effects include:

fewer media breaks between online services and internal processing

shorter processing and turnaround times

improved ability to provide information to citizens and political stakeholders

lower rates of follow-up inquiries due to clearer processes and data

Individually, these effects may seem small. Taken together, however, they lead to noticeable relief, higher quality, and better manageability—i.e., real return on digital investment.

Adoption as a return driver

One key point is often overlooked in digitalization projects: impact only occurs where solutions are actually used. A technically functioning online service that no one uses generates no return on digital investment.

Usability is therefore not a “soft” factor, but a critical success driver. The more understandable, accessible, and reliable a digital offering is, the higher its adoption. And the higher the adoption, the greater the actual relief for the organization and its employees.

More usage leads to more impact—and higher return on digital investment.

In this sense, adoption acts as a multiplier: it determines whether digitalization truly takes hold in everyday operations—or remains ineffective.
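The multiplier effect can be made concrete with simple arithmetic. The Python sketch below assumes, hypothetically, that each case handled through the digital channel saves a fixed number of minutes compared with the manual channel, so that realized relief scales with the adoption rate. The function name and all figures are illustrative assumptions, not measured values.

```python
def realized_hours_saved(total_cases: int, adoption_rate: float,
                         minutes_saved_per_digital_case: float) -> float:
    """Hours saved per year when only digitally handled cases produce savings.

    Hypothetical model: relief scales linearly with the share of cases
    that actually use the digital channel (the adoption rate).
    """
    if not 0.0 <= adoption_rate <= 1.0:
        raise ValueError("adoption_rate must be between 0 and 1")
    digital_cases = total_cases * adoption_rate
    return digital_cases * minutes_saved_per_digital_case / 60


# Same service, same saving per case, different adoption (illustrative figures)
for rate in (0.3, 0.6, 0.9):
    hours = realized_hours_saved(15_000, rate, minutes_saved_per_digital_case=10)
    print(f"adoption {rate:.0%}: about {hours:,.0f} hours per year")
```

In this illustration, raising adoption from 30% to 90% triples the realized relief from 750 to 2,250 hours per year, without any change to the technology itself.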

The measurement challenge: how to properly measure return on digital investment

As compelling as the concept of return on digital investment is, it often fails in practice. Not because impact cannot be measured in principle, but because measurement is often done too late, is too complex, or lacks clear objectives. The key is simple: define the impact first, then measure.

From input to impact: measuring impact instead of activities

Many digital projects remain stuck at the input level: budget, project duration, number of implemented features. However, these metrics say little about whether anything has actually improved.

An impact-oriented approach distinguishes between what was invested, what was delivered, and what actually changed. Only when it becomes clear what effects a measure produces in day-to-day operations—such as time savings, fewer errors, or better control—can we speak of return on digital investment.

A multi-level model has proven effective in clearly separating cause and effect:

Input: resources used (budget, time, personnel, software)

Output: delivered results (e.g., digitized applications, automated processes)

Outcome: direct effects (time savings, lower error rates, improved control)

Impact: broader effects (service quality, satisfaction, trust, efficiency)

Return on digital investment arises primarily at the outcome and impact levels—not from output alone. Importantly, not every effect needs to immediately result in broader societal impact. Often, it is sufficient to make tangible improvements at the process level visible.
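To check whether a KPI set actually reaches the outcome and impact levels, each metric can be tagged with its level in the input → output → outcome → impact chain. The following Python sketch is a hypothetical illustration: the KPI names and the rule that at least one KPI must sit at outcome level or above are assumptions derived from the model described here, not a fixed standard.

```python
from dataclasses import dataclass
from enum import IntEnum


class Level(IntEnum):
    """Levels of the impact chain, ordered from cause to effect."""
    INPUT = 1    # resources used: budget, time, personnel, software
    OUTPUT = 2   # delivered results: digitized applications, automated processes
    OUTCOME = 3  # direct effects: time savings, lower error rates, improved control
    IMPACT = 4   # broader effects: service quality, satisfaction, trust


@dataclass(frozen=True)
class Kpi:
    name: str
    level: Level


def measures_effects(kpis: list[Kpi]) -> bool:
    """True if at least one KPI sits at outcome level or above.

    A set made only of input and output KPIs tracks activity, not return.
    """
    return any(k.level >= Level.OUTCOME for k in kpis)


# Hypothetical KPI sets for the same project
activity_only = [Kpi("budget spent", Level.INPUT),
                 Kpi("features delivered", Level.OUTPUT)]
effect_oriented = activity_only + [Kpi("average processing time", Level.OUTCOME)]

print(measures_effects(activity_only))    # False
print(measures_effects(effect_oriented))  # True
```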

Causality and influencing factors

A key methodological challenge is attributing observed effects clearly to digital measures. Changes in processing times, error rates, or service quality can also be influenced by other factors, such as:

organizational restructuring

personnel changes or learning curve effects

seasonal fluctuations in workload

parallel optimization initiatives

Measurement of return on digital investment should at least qualitatively account for these factors. The goal is not perfect causality, but a plausible and consistent interpretation of impact.
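Where a comparable unit without the new solution exists (for example, regular operations next to a pilot area), one common quantitative option is a difference-in-differences comparison: the change in the pilot unit minus the change in the comparison unit over the same periods. This technique is swapped in for illustration and is not named in the text. It assumes that both units would have developed in parallel without the measure. The figures are hypothetical average processing times in days.

```python
def diff_in_diff(pilot_before: float, pilot_after: float,
                 control_before: float, control_after: float) -> float:
    """Estimate the change attributable to the digital measure.

    The comparison unit's change captures influences that affect both units
    (seasonal workload, staffing, parallel initiatives); subtracting it leaves
    the change specific to the pilot. Assumes parallel trends without the measure.
    """
    pilot_change = pilot_after - pilot_before
    control_change = control_after - control_before
    return pilot_change - control_change


# Hypothetical averages in days: both units got faster, e.g. due to lower seasonal load
effect = diff_in_diff(pilot_before=10.0, pilot_after=6.0,
                      control_before=10.0, control_after=9.0)
print(f"estimated effect of the measure: {effect:+.1f} days")
```

A naive before-and-after view of the pilot alone would credit the measure with four days. The comparison unit suggests that about one of those days would have been gained anyway.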

Baseline and comparison: no statement without a starting point

A common mistake is to think about measurement only after a system has been implemented. At that point, there is no basis for comparison. Without a baseline, it remains unclear whether anything has improved—or whether it is just a perception.

It is therefore essential to define simple comparison points early on. These may include processing times before and after implementation, pilot areas compared to regular operations, or clearly defined process steps. The goal is not scientific perfection, but a traceable development.

Suitable approaches include:

before-and-after comparisons

pilot projects with clearly defined scope

process time measurements along defined steps

comparison of similar organizational units (where possible)

Consistency and traceability matter more than statistical perfection.

For example, if the processing time of an application process is measured, the baseline should be collected over several weeks or months. Single measurements are prone to distortion. Only when comparison periods are consistently defined (same case types, same process definitions) can reliable conclusions be drawn about changes.
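A baseline of this kind can be computed with a few lines of code. The sketch assumes a hypothetical list of closed cases, each with a case type, a period label, and a processing time in days. It filters to a single case type so that before and after stay comparable, and it uses the median rather than single measurements to dampen outliers. The record layout, the case types, and the choice of the median are assumptions for illustration.

```python
from statistics import median
from typing import NamedTuple


class Case(NamedTuple):
    case_type: str
    period: str   # "before" or "after" go-live
    days: float   # processing time from receipt to completion


def median_days(cases: list[Case], case_type: str, period: str) -> float:
    """Median processing time for one case type in one period."""
    values = [c.days for c in cases if c.case_type == case_type and c.period == period]
    if not values:
        raise ValueError(f"no {case_type!r} cases in period {period!r}")
    return median(values)


def relative_change(before: float, after: float) -> float:
    """Relative change; negative values mean a reduction."""
    return (after - before) / before


# Hypothetical records collected over several weeks before and after go-live
cases = [
    Case("residence permit", "before", 9.0), Case("residence permit", "before", 11.0),
    Case("residence permit", "before", 10.0), Case("residence permit", "before", 25.0),
    Case("residence permit", "after", 6.0), Case("residence permit", "after", 5.0),
    Case("residence permit", "after", 7.0),
    Case("parking permit", "before", 2.0),  # different case type: excluded below
]

before = median_days(cases, "residence permit", "before")
after = median_days(cases, "residence permit", "after")
print(f"baseline {before} days, after {after} days, "
      f"change {relative_change(before, after):+.0%}")
```

With the mean instead of the median, the single 25-day case would raise the baseline from 10.5 to 13.75 days and overstate the improvement.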

Monetary vs. non-monetary—and how to combine both

Not every benefit can or should be expressed in monetary terms. Time savings can be monetized, but qualitative metrics often provide a more realistic picture. Satisfaction, transparency, or predictability are difficult to price, yet crucial for actual impact.

A solid return-on-digital-investment approach therefore combines both: where appropriate, effects are quantified; where this is not possible or meaningful, qualitative indicators are added. What matters is not the exact number, but the direction and reliability of the insight.

Time savings can optionally be monetized (e.g., hours × cost rate)

Quality and satisfaction metrics complement the picture

Management KPIs show trends, not absolute truths

The goal is not a precise figure, but a robust basis for decision-making.

A reduction in processing time of 10 minutes across 15,000 cases per year corresponds to 2,500 hours in total. The key question, however, is how this is interpreted: Is this time actually saved (e.g., through staff reduction)? Or does it create additional capacity for other tasks? Only in the first case is there a direct financial effect. In the second, it is an efficiency gain without immediate cost impact—but with the potential for higher output or better service quality.
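The arithmetic of this example, and the distinction between a real cost reduction and freed capacity, can be written out explicitly. In the Python sketch below, the hourly cost rate and the flag for an actual capacity adjustment are hypothetical inputs. The monetary value is only reported as a cost reduction when capacity is really reduced, which keeps the underlying assumption visible.

```python
from dataclasses import dataclass


@dataclass
class TimeSaving:
    minutes_per_case: float
    cases_per_year: int
    cost_rate_per_hour: float  # hypothetical fully loaded hourly rate in euros
    capacity_adjusted: bool    # True only if staffing or spending actually drops

    @property
    def hours(self) -> float:
        return self.minutes_per_case * self.cases_per_year / 60

    def report(self) -> dict:
        """Separate a real cost reduction from capacity that is merely freed up."""
        value = self.hours * self.cost_rate_per_hour
        return {
            "hours_saved": self.hours,
            "cost_reduction_eur": value if self.capacity_adjusted else 0.0,
            "freed_capacity_eur": 0.0 if self.capacity_adjusted else value,
        }


# The example from the text: 10 minutes across 15,000 cases per year
saving = TimeSaving(minutes_per_case=10, cases_per_year=15_000,
                    cost_rate_per_hour=60.0, capacity_adjusted=False)
print(saving.report())
```

With `capacity_adjusted=False`, the 2,500 hours appear as freed capacity rather than as a cost reduction, matching the second case described above.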

Common measurement pitfalls—and how to avoid them

In practice, measurement rarely fails due to lack of intent, but due to structural issues:

- Over-measurement: too many KPIs create opacity instead of clarity

- Lack of consistency: KPIs are defined differently over time

- Incentive distortion (“gaming”): metrics are optimized without real improvement

- Measurement without use: data is collected but not used for management

Sound measurement of return on digital investment therefore requires not many metrics, but a few well-defined, consistently measured, and actively used control indicators.

In short, return on digital investment requires:

clear objectives

simple logic

honest interpretation

Limits of return on digital investment

Despite its benefits, return on digital investment is not a silver bullet.

Not all effects can be meaningfully measured or clearly attributed

The effort required for measurement may exceed the benefit

Long-term effects (e.g., cultural change) are difficult to assess in the short term

There is also a risk that only measurable effects are prioritized, while harder-to-quantify but strategically important aspects are neglected.

Return on digital investment should therefore be understood as a decision-support tool—not as the sole evaluation framework.

KPI toolkit: metrics for return on digital investment (business & public sector)

Return on digital investment is not a one-time set of numbers that you create and then check off. It is a framework for thinking and management that helps make digitalization effective. Fewer lists, less symbolic activity, and more focus on what actually improves in day-to-day operations.

KPIs are not an end in themselves. They are tools to make developments visible and to support decision-making. That is why there is no single “right” metric for return on digital investment.

Instead, a modular toolkit is useful—one from which organizations can choose depending on their objectives. In practice, four perspectives have proven effective.

Important upfront

The KPIs listed below are reference values derived from practical projects, not standards. What matters is the comparison of before vs. after—not the absolute value.

They should be understood as a practical toolkit. The key is not to track as many KPIs as possible, but to select a few clearly defined and consistently measured metrics.

Reference values do not replace a baseline. Reliable insights only emerge from comparing before and after under consistent measurement definitions, comparable case types, and a transparent data foundation.

Perspective 1: Process KPIs

This is where it becomes visible whether digitalization actually simplifies workflows. If lead times decrease, manual handovers are eliminated, or follow-up inquiries decline, that is a clear signal of impact. These KPIs are especially tangible because they connect directly to day-to-day work.

Methodological note: Process KPIs are only reliably comparable if the same process boundaries apply. For example, if “application receipt” or “completion” is defined differently over time, the figures lose their explanatory value.

- Typical effects of digitalization: -20% to -50%

- In highly manual processes (applications, approvals, project coordination), reductions of up to -60% are also achievable.

- Example: An application that used to take 10 days now takes 4–6 days after digitalization.

- Typical starting point in many organizations: 10–30%

- Realistic target after process digitalization: 40–70%, depending on complexity

- What matters is not full automation, but the reduction of manual handovers.

- Before digitalization often: 30–50% of cases with at least one follow-up inquiry

- After standardization and clear digital forms: below 15–20%

- Every avoided follow-up inquiry saves time on both sides.

- Typical reduction: -20% to -40%

- Especially relevant in the case of media breaks and manual data entry.

- Often high in historically evolved processes

- The goal is a significant reduction in manual transfers between systems, files, or paper and system

- Especially relevant for administrative and project processes with many handovers
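The methodological note on process boundaries can be made explicit by deriving lead times from timestamped events with fixed start and end events. The Python sketch below assumes a hypothetical event log of (case ID, event name, timestamp) entries and hypothetical event names `application_received` and `case_closed`. As long as these boundary definitions stay the same, lead times from different periods remain comparable. The last line shows that the 10-day to 4–6-day example above corresponds to a reduction of 40–60%.

```python
from datetime import datetime

# Fixed process boundaries (hypothetical event names); changing them breaks comparability
START_EVENT = "application_received"
END_EVENT = "case_closed"


def lead_times_days(events: list[tuple[str, str, datetime]]) -> dict[str, float]:
    """Lead time per case in days, from START_EVENT to END_EVENT.

    Cases missing either boundary event (e.g. still open) are left out.
    """
    starts: dict[str, datetime] = {}
    ends: dict[str, datetime] = {}
    for case_id, name, ts in events:
        if name == START_EVENT:
            starts[case_id] = ts
        elif name == END_EVENT:
            ends[case_id] = ts
    return {cid: (ends[cid] - starts[cid]).total_seconds() / 86_400
            for cid in starts if cid in ends}


def reduction(before_days: float, after_days: float) -> float:
    """Relative change, e.g. -0.4 for a 40% reduction."""
    return (after_days - before_days) / before_days


events = [
    ("A-1", "application_received", datetime(2026, 3, 2, 9, 0)),
    ("A-1", "documents_checked", datetime(2026, 3, 3, 14, 0)),
    ("A-1", "case_closed", datetime(2026, 3, 7, 9, 0)),
    ("A-2", "application_received", datetime(2026, 3, 4, 9, 0)),  # still open
]
print(lead_times_days(events))
print(f"{reduction(10, 6):+.0%} to {reduction(10, 4):+.0%}")
```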

Perspective 2: Financial and management KPIs

Especially for project-based service providers, the economic perspective plays a central role. Utilization, planning accuracy, or deviations between calculation and reality show whether digitalization helps manage services better and identify risks earlier.

Methodological note: Financial and management KPIs become more meaningful when planning values are not “smoothed” retrospectively. Only with a stable baseline do plan/actual deviations and forecasts become truly relevant for management.

- Common starting point: 60–70%

- Good, realistic target values: 75–85%

- Anything above that increases the risk of overload and loss of quality.

- Before structured project and service tracking: 50–65%

- After introducing clear project and time-tracking logic: 70–80%

- Typical deviation without clean management: ±20–30%

- With ongoing project controlling: ±5–10%

- An enormous lever for planning reliability.

- Target value: below 10%, long term below 5%

- A prerequisite for learning systematically from projects.

- Reduction through clean service records and billing: -10 to -20 days is realistic.

- Typical without end-to-end project management: significant fluctuations between plan and reality

- The goal is not zero deviation, but early visibility and better manageability

- Especially relevant in multi-project and portfolio contexts
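The note about not smoothing planning values retrospectively can be supported technically by freezing the original plan and measuring deviations against that snapshot. The Python sketch below is a hypothetical illustration: the class, the work packages, and the effort figures are invented, and the deviation is computed as actual minus planned, divided by planned.

```python
from collections.abc import Mapping
from dataclasses import dataclass, field
from types import MappingProxyType


@dataclass
class ProjectPlan:
    """Keeps the original (baseline) plan read-only; replanning only changes `current`."""
    baseline: Mapping[str, float]
    current: dict[str, float] = field(default_factory=dict)

    def __post_init__(self) -> None:
        self.baseline = MappingProxyType(dict(self.baseline))  # frozen copy of the original plan
        if not self.current:
            self.current = dict(self.baseline)

    def deviation(self, actual: Mapping[str, float], against_baseline: bool = True) -> float:
        """Relative plan/actual deviation of total effort: (actual - planned) / planned."""
        plan = self.baseline if against_baseline else self.current
        planned = sum(plan.values())
        return (sum(actual.values()) - planned) / planned


# Hypothetical project: effort in hours per work package
plan = ProjectPlan(baseline={"design": 100, "build": 300})
plan.current["build"] = 380  # replanned mid-project
actual = {"design": 110, "build": 370}

print(f"against baseline plan: {plan.deviation(actual):+.1%}")
print(f"against updated plan:  {plan.deviation(actual, against_baseline=False):+.1%}")
```

Measured against the updated plan, the project looks perfectly on track; only the frozen baseline shows the 20% overrun that forecasting and post-calculation need to learn from.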

Perspective 3: Service and usage KPIs

In the public sector, usage is a decisive indicator. Digital offerings only create value if they are actually adopted. Completion rates, drop-offs, or first-contact resolution rates indicate whether services are understandable and practical.

Methodological note: Service KPIs should not be interpreted in isolation. High usage is only a success if quality, clarity, and processability are also ensured in the background.

- Many digital public services start at 30–50%

- Well-designed, understandable services reach 70–90%

- Usage is the most important impact indicator.

- Critical threshold: >30%

- Target range: below 15–20%

- Anything above that points to clarity or media-break problems.

- Before digitalization: often 50–60%

- After clear processes and data availability: 75–85%

- The goal is less the absolute rating than the improvement.

- Typical effects: an improvement of 0.5 to 1.0 points on the rating scale, or +15–25% in score

- Shows whether digital offerings actually relieve analogue or manual channels

- Especially relevant for omnichannel services or public services with a paper alternative

- A central lever for public value and internal relief
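Completion and drop-off rates can be derived from simple funnel counts of an online service. The Python sketch below assumes hypothetical step names and monthly counts. It defines completion as users reaching the last step relative to the first, and drop-off relative to the previous step; other definitions are possible, so whichever one is chosen should be fixed and kept stable.

```python
def completion_rate(funnel: list[tuple[str, int]]) -> float:
    """Share of users who reach the last step, relative to the first step."""
    first, last = funnel[0][1], funnel[-1][1]
    return last / first if first else 0.0


def step_drop_offs(funnel: list[tuple[str, int]]) -> list[tuple[str, float]]:
    """Share of users lost between each pair of consecutive steps."""
    result = []
    for (step_a, n_a), (step_b, n_b) in zip(funnel, funnel[1:]):
        result.append((f"{step_a} -> {step_b}", 1 - n_b / n_a if n_a else 0.0))
    return result


# Hypothetical monthly counts for one online application service
funnel = [
    ("form opened", 2000),
    ("identity verified", 1500),
    ("documents uploaded", 1200),
    ("submitted", 1100),
]
print(f"completion rate: {completion_rate(funnel):.0%}")
for transition, rate in step_drop_offs(funnel):
    print(f"  drop-off {transition}: {rate:.0%}")
```

Looking at drop-offs per step rather than a single overall figure shows where users give up, which is where clarity or media-break problems are most likely to sit.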

Perspective 4: Organizational and employee KPIs

Last but not least, digitalization also has an internal impact. When onboarding times decrease, coordination effort declines, or transparency increases, long-term relief is created. These effects can often be captured well through targeted surveys or simple indicators.

Methodological note: Organizational and employee KPIs are often indirect. Precisely for that reason, they should not be dismissed as “soft” metrics, but collected through standardized questions, fixed intervals, and consistent evaluation.

- Reduction of 20–40% with clear, standardized workflows

- Common baseline: 40–60% agreement

- After structured project and resource management: 70–85%

- Reduction of 15–30% is realistic

- Fewer status meetings because information is available.

- Especially relevant for project, resource, and controlling data

- Typical quick win after standardization: significantly fewer incomplete or contradictory records

- Basic prerequisite for reliable return-on-digital-investment measurement

- A central early indicator of later return on digital investment

- Shows whether processes are truly being lived in the system or whether shadow solutions remain in place

- Especially important in implementation and pilot phases
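Survey-based indicators such as the share of agreement only become comparable over time when the question, the scale, and the evaluation rule stay fixed. The Python sketch below assumes a hypothetical five-point scale on which answers 4 and 5 count as agreement. That threshold is an assumption and should be defined once and then kept.

```python
# Assumption: 1 = strongly disagree ... 5 = strongly agree; 4 and 5 count as agreement
AGREE_VALUES = frozenset({4, 5})


def agreement_share(responses: list[int]) -> float:
    """Share of valid answers (1-5) that count as agreement; invalid values are ignored."""
    valid = [r for r in responses if 1 <= r <= 5]
    if not valid:
        raise ValueError("no valid responses")
    return sum(r in AGREE_VALUES for r in valid) / len(valid)


# Hypothetical answers to the same standardized question in two survey waves
wave_1 = [2, 3, 4, 5, 3, 4, 2, 4, 3, 5]
wave_2 = [4, 5, 4, 3, 5, 4, 4, 2, 5, 4]
print(f"wave 1: {agreement_share(wave_1):.0%}, wave 2: {agreement_share(wave_2):.0%}")
```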

Return on digital investment is not reflected in isolated ideal values, but in consistent improvements across multiple metrics. When several KPIs move in the right direction at the same time (faster, more stable, more predictable, more understandable), this creates robust return on digital investment.
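Checking whether several KPIs move in the right direction at the same time requires knowing, for each metric, whether lower or higher is better. The Python sketch below is a hypothetical illustration; the KPI names, values, and the simple count of improved metrics are assumptions, not a scoring method prescribed here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KpiChange:
    name: str
    before: float
    after: float
    lower_is_better: bool

    @property
    def improved(self) -> bool:
        """True if the KPI moved in its desired direction."""
        if self.lower_is_better:
            return self.after < self.before
        return self.after > self.before


def improvement_summary(changes: list[KpiChange]) -> tuple[int, int]:
    """Return (number of improved KPIs, total number of KPIs)."""
    return sum(c.improved for c in changes), len(changes)


# Hypothetical before/after values
changes = [
    KpiChange("lead time (days)", 10.0, 6.0, lower_is_better=True),
    KpiChange("follow-up inquiry rate", 0.40, 0.18, lower_is_better=True),
    KpiChange("online completion rate", 0.45, 0.72, lower_is_better=False),
    KpiChange("forecast deviation", 0.25, 0.27, lower_is_better=True),
]
improved, total = improvement_summary(changes)
print(f"{improved} of {total} KPIs improved")
for c in changes:
    print(f"  {'+' if c.improved else '-'} {c.name}")
```

A result such as three out of four indicates broad improvement, while the one KPI moving the wrong way is a prompt for closer analysis rather than a verdict.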

The practical process in six steps: systematically planning and realizing return on digital investment

Digital initiatives without a clear plan often lead to measurement without insight and implementation without impact. Systematically realizing return on digital investment requires a structured and transparent process that runs from defining benefit hypotheses to continuous improvement. It builds on established approaches from digitalization practice and impact-oriented frameworks, such as the input–output–outcome–impact model also described by the German DigitalService.

Step 1: Define objectives and benefit hypotheses

The starting point is not the technology, but the question of value: How do you know that digitalization is actually creating impact?

A typical formulation might be: “After introducing the digital solution, the average processing time decreases by at least 40% compared to today.” Such goals do not just define a direction; they make assumptions about impact measurable and testable. This approach is also emphasized in practical impact frameworks (e.g., measurement goals in the DigitalService impact model).

Key elements include:

a clear, operational description of the objective

a benefit hypothesis that is directly measurable

a defined target value and timeframe (“by the end of 2026 …”)

If these hypotheses are clearly defined from the outset, they support the entire management and steering of the project. It is important that benefit hypotheses are falsifiable. A statement like “processes will become more efficient” cannot be verified.

More effective are concrete target values with a time reference—targets that can actually be missed.
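A falsifiable benefit hypothesis can be represented as data: a baseline, a target change, and a deadline. The Python sketch below encodes the example formulation above (at least 40% shorter processing time); the baseline, the deadline, and the measured values are hypothetical. Because the hypothesis has a concrete threshold and date, the evaluation can return “missed”, which is exactly what makes it testable.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class BenefitHypothesis:
    description: str
    baseline: float
    target_reduction: float  # 0.40 means "at least 40% lower than the baseline"
    deadline: date

    def evaluate(self, measured: float, on: date) -> str:
        """Return 'confirmed', 'open' (before the deadline) or 'missed'."""
        reduction = (self.baseline - measured) / self.baseline
        if reduction >= self.target_reduction:
            return "confirmed"
        return "missed" if on >= self.deadline else "open"


h = BenefitHypothesis(
    description="Average processing time decreases by at least 40% compared to today",
    baseline=10.0,                # hypothetical baseline in days
    target_reduction=0.40,
    deadline=date(2026, 12, 31),  # hypothetical "by the end of 2026"
)
print(h.evaluate(measured=7.5, on=date(2026, 6, 30)))   # -> open (25% lower, deadline ahead)
print(h.evaluate(measured=5.8, on=date(2026, 9, 30)))   # -> confirmed (42% lower)
print(h.evaluate(measured=7.0, on=date(2026, 12, 31)))  # -> missed (30% lower at deadline)
```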

Step 2: Select processes with the highest leverage

Not every process is suitable as an initial pilot. Choose those processes where high volumes, significant pain points, and frequent media breaks come together. These criteria help create focus and ensure that limited transformation capacity is used where the return is highest.

Examples of high-impact processes:

high-volume application handling

service processes with many manual handovers

compliance or reporting processes that currently generate many follow-up inquiries

This also highlights that digitalization is not about technology itself, but about improving processes and significantly reducing friction between people and systems.
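The selection criteria (high volumes, significant pain points, frequent media breaks) can be turned into a simple, transparent ranking. The Python sketch below uses hypothetical candidate processes, scores on a 1–5 scale, and equal weights. Both the scale and the weights are assumptions that a team would agree on before scoring, so that the ranking can be discussed rather than simply accepted.

```python
from dataclasses import dataclass

# Hypothetical weights; agree on them before scoring, then keep them fixed
WEIGHTS = {"volume": 1.0, "pain_points": 1.0, "media_breaks": 1.0}


@dataclass(frozen=True)
class Candidate:
    name: str
    volume: int        # 1 (rare) .. 5 (very high case volume)
    pain_points: int   # 1 (smooth) .. 5 (frequent complaints, delays, rework)
    media_breaks: int  # 1 (end-to-end digital) .. 5 (many paper/system transfers)

    def score(self) -> float:
        """Weighted sum of the criteria scores."""
        return sum(w * getattr(self, criterion) for criterion, w in WEIGHTS.items())


candidates = [
    Candidate("application handling", volume=5, pain_points=4, media_breaks=5),
    Candidate("internal room booking", volume=3, pain_points=1, media_breaks=2),
    Candidate("quarterly compliance report", volume=1, pain_points=4, media_breaks=4),
]
for c in sorted(candidates, key=Candidate.score, reverse=True):
    print(f"{c.score():4.1f}  {c.name}")
```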

Step 3: Measurement design (baseline, data sources, frequency)

Anyone who measures must know when, what, and how often to measure. A solid measurement design includes the following elements:

defining a baseline: what was the state before the initiative?

identifying data sources: system usage data, log files, timestamps, employee surveys

determining the measurement frequency: weekly, monthly, or quarterly

The challenge here is less about technology and more about the discipline of collecting data consistently and in a comparable way.

Example: If you measure processing times, you need a baseline over several weeks before implementation—only then can changes be meaningfully interpreted.

A common issue in practice is defining KPIs too late. If measurement only begins after implementation, the basis for comparison is missing. Measurement design is therefore an integral part of project planning—not a downstream step.
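Treating measurement design as part of project planning can be supported by writing the plan down before go-live and checking it automatically. The Python sketch below is hypothetical: the field names, the data source label, and the minimum of four baseline weeks are assumptions for illustration (the text only asks for “several weeks”).

```python
from dataclasses import dataclass
from datetime import date, timedelta

MIN_BASELINE_WEEKS = 4  # assumption; the text asks for "several weeks"


@dataclass(frozen=True)
class MeasurementPlan:
    kpi: str
    data_source: str  # e.g. timestamps from the case management system
    frequency: str    # "weekly", "monthly" or "quarterly"
    baseline_start: date
    baseline_end: date
    go_live: date

    def problems(self) -> list[str]:
        """Return design issues; an empty list means the plan is usable."""
        issues = []
        if self.frequency not in {"weekly", "monthly", "quarterly"}:
            issues.append(f"unknown frequency {self.frequency!r}")
        if self.baseline_end > self.go_live:
            issues.append("baseline overlaps go-live: no clean before/after comparison")
        if self.baseline_end - self.baseline_start < timedelta(weeks=MIN_BASELINE_WEEKS):
            issues.append(f"baseline shorter than {MIN_BASELINE_WEEKS} weeks")
        return issues


plan = MeasurementPlan(
    kpi="average processing time (days)",
    data_source="case system timestamps",
    frequency="weekly",
    baseline_start=date(2026, 5, 4),
    baseline_end=date(2026, 5, 18),
    go_live=date(2026, 6, 1),
)
print(plan.problems())
```

Running such a check during project planning surfaces a too-short or missing baseline while it can still be collected, rather than after go-live.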

Step 4: Implementation in iterations

Instead of a “big bang” approach, an incremental model is recommended:

- Minimum Viable Product (MVP): the smallest deliverable unit of value

- Pilot phase: for a limited group and short period

- Scaling and standardization: after a successful pilot

An iterative process enables fast feedback, early measurement, and smart adjustments—before large budgets are committed.

Step 5: Adoption & change as a mandatory component

Digitalization projects rarely fail due to technology—they fail due to lack of adoption in everyday use. Training, clear roles, communication, and support are just as important as technical development.

In practice, especially in public administrations and large service organizations, digital solutions are often formally introduced but not actually used if there is no structured change management. This is a classic barrier to realizing return on digital investment.

People need to understand why a change benefits them—and they need to be enabled to apply it.

Step 6: Controlling & continuous improvement

After implementation comes improvement. A regular review cycle (e.g., monthly or quarterly) creates continuous learning and adjustment loops:

What do the measurements show?

Which hypotheses have been confirmed?

Where is adjustment needed?

“Lessons learned” thus becomes not a closing remark, but a recurring practice. Consistent controlling ensures that return on digital investment is not only realized at the end, but continuously.

How business coordination software can support return on digital investment

Up to this point, the focus has been on models, impact logic, and KPIs. In practice, however, the key question is how to actually realize return on digital investment in day-to-day operations. This is exactly where many organizations struggle: the objectives are defined, the impact is meant to be measured—but the operational implementation remains fragmented. The bottleneck is often not the lack of individual tools, but the lack of coordination across projects, resources, services, and decisions.

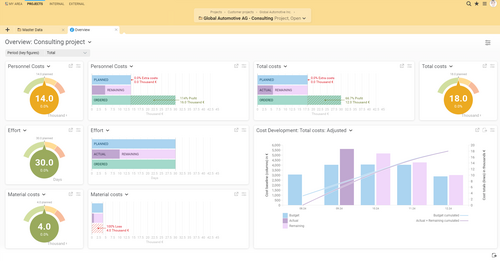

This coordination is one of the biggest, and often underestimated, levers for achieving return on digital investment. Integrated systems such as business coordination software address it by not only capturing information but also bringing it together in a unified management context. BCS serves as an example here.

Potential return effects of such systems

Where systems like BCS deliver impact can be illustrated across several typical functional areas:

Project planning and control: Standardized templates, milestones, and tracking of risks and issues create comparable and reliable project structures. This reduces surprises and improves the manageability of ongoing initiatives.

Resource planning and utilization management: Transparency regarding capacities, skills, and availability helps identify bottlenecks earlier, avoid overload, and manage utilization more effectively. This improves predictability and forecasting capability.

Time tracking and service records: When time and services are recorded directly within the project context, post-processing effort decreases. At the same time, the quality of billing, post-calculation, and financial analysis improves.

End-to-end integration of proposals, budgets, and controlling: A consistent data flow from proposal through project to evaluation reduces manual handovers between systems and formats (media breaks) as well as the effort of interpreting data. Information no longer needs to be transferred or explained multiple times.

Reporting and management dashboards: Up-to-date, integrated data replaces manual consolidation and shortens decision-making paths. Transparency thus becomes the foundation for forward-looking management rather than an end in itself.

Return on digital investment for project-based service providers

The benefits of integrated systems become particularly clear in project-based service organizations—such as IT, consulting, engineering, or agencies. Here, economic success depends less on individual products and more on the ability to deliver projects reliably, predictably, and efficiently.

Using BCS as an example, it becomes clear how such systems can support the entire project lifecycle: planning is based on standardized structures, experience is reused through templates and standards, and risks and issues are tracked in a structured way rather than informally. This reduces uncertainty and improves the quality of project management.

A key lever lies in resource planning. Instead of isolated team or departmental views, an organization-wide perspective on capacities, skills, and availability is created. This makes bottlenecks visible earlier, clarifies priorities, and enables more reliable project planning.
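A few lines of Python show what an organization-wide view adds. The sketch below assumes that planned hours are available per person and project, and that each person has a known capacity for the same period. Names, figures, and the 100 % threshold are hypothetical. Because hours are summed across all projects, the sketch reveals overloads that a single team or project view would miss.

```python
from collections import defaultdict


def utilization_by_person(
    assignments: list[tuple[str, str, float]],
    capacity: dict[str, float],
) -> dict[str, float]:
    """Planned hours across all projects divided by available hours, per person."""
    planned: dict[str, float] = defaultdict(float)
    for person, _project, hours in assignments:
        planned[person] += hours
    return {
        person: round(planned[person] / available, 2)
        for person, available in capacity.items()
        if available > 0  # skip people with no capacity in this period
    }


def find_bottlenecks(utilization: dict[str, float], threshold: float = 1.0) -> list[str]:
    """People whose planned load exceeds the threshold (1.0 = fully booked)."""
    return sorted(person for person, load in utilization.items() if load > threshold)


# Hypothetical monthly figures: each project on its own looks manageable
assignments = [("anna", "P1", 80), ("anna", "P2", 90), ("ben", "P1", 100), ("chris", "P3", 35)]
capacity = {"anna": 140, "ben": 140, "chris": 70}
load = utilization_by_person(assignments, capacity)
print(load, find_bottlenecks(load))
```

In this example, neither P1 nor P2 alone shows a problem for "anna". The overload only becomes visible once both projects are viewed together.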

Return on digital investment also becomes very tangible in time tracking, service documentation, and post-calculation. When services are recorded, evaluated, and further processed within the same system context, corrections decrease, billing cycles shorten, and financial analyses become more reliable.

Return on digital investment in the public sector: coordination, transparency, and manageability

In the public sector, the focus is often different. Here, the emphasis is less on margins and more on coordination, traceability, and the ability to act in complex initiatives. Systems like BCS do not replace specialized administrative systems, nor do they map public services in full detail. Their strength lies in bringing together programs, projects, responsibilities, and resources across organizational units.

Large initiatives such as OZG implementations, register modernization, or cross-departmental digitalization programs consist of many interlinked projects. Responsibilities are distributed, timelines overlap, and resources are limited. In this complex environment, there is often no consistent overall view of where projects actually stand, where delays occur, and which dependencies are becoming critical.

Online Access Act (OZG)

Goal: provide digital public services (for citizens & businesses)

Adopted: 2017

Original deadline: end of 2022

Scope: approx. 575 public services

Implementation by: federal, state, and local governments

Core idea: “one for all” (EfA principle – one solution can be reused)

Access: via government portals (e.g. citizen portals)

Key components: online applications, user accounts, digital identification

Status: partially implemented, ongoing expansion (OZG 2.0)

Integrated coordination systems create a shared management layer. Project and portfolio status can be displayed consistently, budgets can be tracked in a comparable way, and priorities can be made transparent. Return on digital investment then appears not primarily as short-term cost savings, but as improved ability to act and make decisions.

Decisions also become traceable. In many organizations, decisions, changes, and their justifications are scattered across emails, presentations, shared drives, and personal storage. When this information is documented in a structured way within the project context, transparency, auditability, and reliability in day-to-day operations increase.

Another key lever is the coordination of external service providers. Progress, approvals, and the development of time and costs can be represented in integrated systems in the same context as internal project information. External contributions are therefore not viewed in isolation but managed as part of the overall initiative.

Prerequisites: when integration actually creates return on digital investment

As effective as integrated systems can be, their benefit is not automatic. The described effects require that

data is captured consistently and completely

processes are sufficiently standardized

roles and responsibilities are clearly defined

usage is consistently embedded in day-to-day operations

If these prerequisites are not met, no return on digital investment is created. In the worst case, existing complexity is merely shifted into a central system. Measurable value therefore does not arise from the software alone, but from the interaction of system, process design, governance, and usage.

Implementation blueprint: starting with BCS on the path to return on digital investment (instead of “tool first”)

A common mistake in digitalization initiatives is trying to address too many objectives at once. It is more effective to start with a clearly defined leverage area, make value visible early, and integrate measurement from the very beginning.

An excellent example of this is provided by the case study of ECKD GmbH.

Jana Kurik, PMO at ECKD

The implementation of the new project management software took place … in a three-month pilot phase. During this time, we were able to test various functions in daily operations and evaluate which adjustments were suitable for our way of working.

What happened here is exactly the right approach: start small, use, measure. ECKD focused in particular on project planning, time tracking, and controlling. This combination delivers very concrete KPIs, such as plan/actual deviations, actual time recorded per project, or a consolidated view of project progress, without becoming overly complex.
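Plan/actual deviation is one of the simplest KPIs to derive once planned and recorded hours sit in the same system. The following Python sketch assumes that both are available per project. The project IDs, hours, and the 10 % tolerance for flagging a project are hypothetical; in practice, your own controlling rules would set them.

```python
def plan_actual_report(
    planned: dict[str, float],
    actual: dict[str, float],
    tolerance_pct: float = 10.0,
) -> dict[str, dict]:
    """Deviation of recorded hours from planned hours, per project."""
    report = {}
    for project, plan in planned.items():
        spent = actual.get(project, 0.0)  # no time recorded yet counts as 0 h
        deviation = spent - plan
        pct = round(deviation / plan * 100, 1) if plan else None
        report[project] = {
            "plan": plan,
            "actual": spent,
            "deviation_h": deviation,
            "deviation_pct": pct,
            # Flag projects whose deviation exceeds the tolerance in either direction.
            "flagged": pct is not None and abs(pct) > tolerance_pct,
        }
    return report


# Hypothetical planned vs. recorded hours for two projects
for project, row in plan_actual_report({"P1": 200, "P2": 120}, {"P1": 230, "P2": 110}).items():
    print(project, row)
```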

A second use case in which BCS creates measurable impact is shown in the case study of gematik GmbH. The challenge there was to plan and evaluate resources consistently across a matrix organization:

Mirko Richter, People Analyst at gematik GmbH

Resource planning was a challenge under the existing conditions … Therefore, we decided to introduce a cross-functional solution that could provide our project managers and line managers with greater transparency regarding the utilization of their employees.

The case studies of ECKD and gematik illustrate two typical starting points:

At ECKD, the focus was on project planning, time tracking, and controlling—a combination that quickly makes concrete KPIs visible, such as plan/actual deviations, time effort, and project progress.

At gematik, the focus was on resource planning and portfolio reporting—providing transparency on utilization, bottlenecks, and priorities within a matrix organization.

From this, two effective entry patterns can be derived:

project controlling + time tracking + reporting

portfolio reporting + resource planning

In the short term, quick wins often arise through better transparency, reduced search effort, and more consistent reporting. In the long term, the real leverage comes from standardization, more reliable forecasts, improved post-calculations, and greater management capability.

Data model and governance as prerequisites for return

An integrated system only becomes effective when data is consistent, comparable, and complete. The data model and standards are therefore not secondary concerns, but a strategic prerequisite. Organizations must define:

what constitutes a project,

how status values are interpreted,

which roles and permissions apply, and

which KPIs should be measured.

Governance is equally important:

Who is responsible for data quality?

Who reviews the metrics?

Who takes corrective action when usage, predictability, or transparency fall short of expectations?

Only with clear responsibilities, regular review cycles, and feedback loops does system usage translate into real, manageable impact.

When such an approach is particularly worthwhile

Integrated coordination approaches are especially valuable when coordination itself has become a bottleneck. Typical indicators include:

many parallel projects with personnel or functional dependencies

high coordination effort between departments or external stakeholders

information scattered across multiple systems or Excel files

time-consuming, manual reporting

low transparency regarding utilization, priorities, and overall status

The more pronounced these symptoms are, the more likely an integrated approach can become the foundation for measurable return on digital investment.

Checklist & decision guide: when is the BCS approach particularly worthwhile?

Not every organization needs business coordination software right away. Using BCS becomes worthwhile when coordination turns into a bottleneck and transparency can no longer be achieved with reasonable effort. The following checklist helps you assess your own environment realistically.

The more of the following points you answer with “yes,” the more likely you are to benefit from BCS:

Quick wins vs. long-term benefits: what you can realistically expect

A common misconception is that return on digital investment must be visible immediately across all areas. In practice, effects tend to appear in two waves.

In the short term, quick wins arise primarily from improved transparency. Project status, resource overviews, and consistent reporting reduce search and coordination effort almost immediately. These effects are important because they build trust and increase acceptance.

In the long term, the real leverage unfolds. Standardized project structures, reliable forecasts, improved post-calculations, and higher quality in planning and execution do not emerge overnight but through consistent use and continuous improvement. This is where transparency turns into real management capability.

Both go hand in hand: quick wins secure the initial momentum, while long-term benefits justify the investment.

FAQ: frequently asked questions about return on digital investment

What is the difference between return on digital investment and ROI?

ROI primarily looks at financial returns relative to an investment. Return on digital investment goes further: it also considers non-monetary effects such as time savings, quality, transparency, manageability, and adoption. In digital initiatives, and particularly in the public sector, these effects are often more important than a traditional financial return.

Which KPIs are the “most important”?

There are no universally “correct” KPIs. What matters is that KPIs fit the objective. For operational processes, cycle times or inquiry rates are useful; for project-based organizations, utilization, forecast accuracy, or post-calculation deviations; for public administration, usage and completion rates of digital services. The number of KPIs is less important than their relevance for management and decision-making.
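Forecast accuracy can be defined in several ways. One common convention, used here purely as an illustration, is 1 minus the mean absolute percentage error (MAPE) between forecast and actual values. Examples include the forecast and actual effort of completed projects. The Python sketch below assumes paired values with non-zero actuals, and the figures are hypothetical.

```python
def forecast_accuracy(forecasts: list[float], actuals: list[float]) -> float:
    """Return 1 - MAPE, floored at 0 (1.0 means every forecast was exact)."""
    if not forecasts or len(forecasts) != len(actuals):
        raise ValueError("Forecasts and actuals must be non-empty and of equal length.")
    if any(a == 0 for a in actuals):
        raise ValueError("MAPE is undefined for actual values of zero.")
    mape = sum(abs(f - a) / abs(a) for f, a in zip(forecasts, actuals)) / len(actuals)
    return max(0.0, 1.0 - mape)


# Hypothetical forecast vs. actual effort (hours) of three completed projects
print(round(forecast_accuracy([100, 200, 150], [110, 180, 150]), 3))
```

Whether this convention or another one (for example, weighting large projects more heavily) fits better depends on the management question the KPI is meant to answer.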

How can non-monetary benefits be measured reliably?

Non-monetary benefits are often made visible through comparisons: before/after analyses, trend developments, or standardized surveys. Time savings, predictability, transparency, or satisfaction can be measured reliably even without converting them into monetary values. The goal is not exact pricing, but a credible statement about impact.

How quickly can effects be observed?

This strongly depends on the starting point and scope. Initial effects often appear where transparency increases, such as in project status or resource overviews. Sustainable effects—like better forecasts or higher quality—take more time because they rely on learning and standardization processes. Clear goals, a defined baseline, and consistent use are crucial.

Is return on digital investment possible without new software?

In principle, yes. Process improvements, clear responsibilities, or better goal definitions can also create impact. However, in complex environments this approach quickly reaches its limits. Without integrated systems, measurement remains laborious and management fragmented. Software is not an end in itself, but it is often necessary for sustainable return on digital investment.

How does business coordination software support return on digital investment?

Business coordination software connects project management with resource planning, time and service data, controlling, and reporting in a consistent system. The focus is not on individual projects, but on their interaction. This coordination is what makes the difference and creates the foundation for measurable return on digital investment.

Return on digital investment at a glance: 5 takeaways

Digitalization is activity. Return on digital investment is the result.

Without a measurement logic, value remains a claim.

Impact does not come from tools, but from manageability.

Public value is the return of public administration.

Coordination is the greatest—and often underestimated—lever for return.

About the author

Kai Sulkowski is an editor in the marketing department of Projektron GmbH. In his professional articles, he focuses on topics at the intersection of digitalization, project management, and corporate management. For this article, he has outlined how organizations can make the value of digital initiatives measurable—from KPIs and impact logic to the question of how transparency, resource management, and controlling contribute to return on digital investment.

Further reading recommendations & sources

[1] German DigitalService: “Counting what counts: Why we should look at return on digital investment”, https://digitalservice.bund.de/blog/zaehlen-was-zaehlt-warum-wir-uns-die-digitalrendite-anschauen-sollten, accessed March 2026.

[2] eGovernment.de: Topic focus “Return on digital investment” and “impact orientation”, https://www.egovernment.de, accessed March 2026.

[3] Initiative D21 / Federal Ministry of the Interior (BMI): eGovernment Monitor, https://initiatived21.de/publikationen/egovernment-monitor/, accessed March 2026.

[4] Bitkom: Studies on digital public administration, https://www.bitkom.org/Themen/Oeffentliche-Verwaltung, accessed March 2026.

[5] National Regulatory Control Council (NKR): Reports and statements on e-government and digitalization, https://www.normenkontrollrat.bund.de, accessed March 2026.
